MIDI stems from the idea that you strike a key on this keyboard over here and have all the information about that event travel down a cable into a synth over there, which then plays the right note. MIDI is the digital control of one electronic musical device by another, and because it’s digital, it can be recorded, edited and manipulated – that’s where the real fun begins.

WHAT IS MIDI?

MIDI is one of the most creative technologies ever conceived. It makes a connection between different pieces of musical equipment that can be immensely empowering and terrifically useful. It took music production out of the hands of the professional sound engineer and put it into the laptops and devices of anyone with a desire to make music. It’s arguably the most significant development since the invention of tape recording and wholly redesigned how we approach the making of music. MIDI is what lets you write a symphony on your computer; it enables you to build beats, create soundtracks, find grooves and keep control over numerous hardware and software instruments, synthesizers and samplers. It can connect you to a world of sound, to endless possibilities available through your fingertips or your mouse. MIDI is marvellous.

WHAT DOES MIDI STAND FOR?

MIDI stands for “Musical Instrument Digital Interface”. It began as a practical solution to a simple problem: if you wanted to play different sounds, you needed lots of synthesizer keyboards within reach. With MIDI, one “Master” keyboard could be plugged into many others, making the whole multi-synthesizer experience simpler. The designers of MIDI went far beyond that idea and built in a lot of functionality to capture the performance of music itself, which is what made it revolutionary.

(Image: Official MIDI logo from the MIDI Manufacturers Association)

WHAT IS MIDI USED FOR?

MIDI is used to play one synth from another and record and arrange the data that passes between them, which is called “sequencing”.

Let’s unpack that a little. MIDI is a digital protocol; it’s a language that allows different electronic instruments to communicate with each other for the purposes of control. These might be messages such as “Note on” and “Note off” along with data on the pitch and how hard the note was struck (velocity). At a basic level, it’s precisely the sort of information you’ll find on a piece of written sheet music, and that’s a perfect way of understanding it. When talking about MIDI we are talking about a representation of notes and expression, like you’d find on a score, rather than a recording of the music itself. If you wanted to make a piece of music, you could play it on an instrument and capture that performance through a microphone. But if you write the music out as a score, you could get different musicians to play it with different instruments. You could change the timing, the emphasis, change the key it’s being played in or even change the notes entirely. That’s what MIDI is. MIDI is a sheet of music in digital form that enables it to be edited, changed and performed by all sorts of electronic musical devices.

WHAT IS A MIDI SEQUENCER OR “DAW”?

Once you start recording and manipulating the data, you’ll find that MIDI becomes a fantastic compositional tool. The device used for recording MIDI is called a Sequencer. This can be a hardware sequencer, often built into a synthesizer or keyboard, but it is more commonly and more comprehensively done with software on a computer, also often referred to as a “DAW” (Digital Audio Workstation). DAWs go beyond MIDI by integrating additional functionality for audio recording and processing, but we’ll stay focussed on the MIDI side of things:

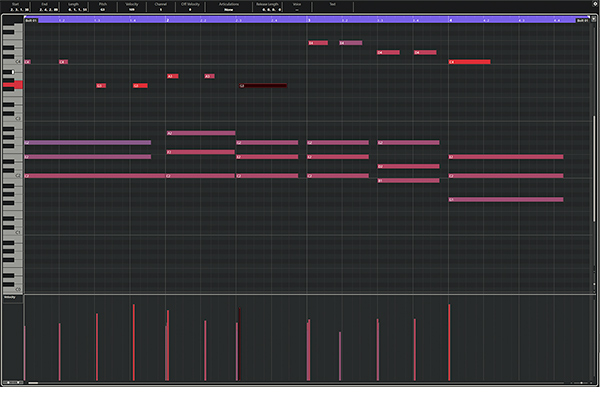

The software sequencer offers visual representations of the notes and other data generated by our performance and MIDI devices. This makes it super easy to edit and arrange. While a MIDI sequence can be visually represented by a sheet of music, and most software sequencers have the ability to do this, it is most commonly seen as blocks on an editor called a “Piano Roll”.

A Piano Roll is a grid where on the left, you have a piano keyboard on its side with high notes at the top and low notes at the bottom. Along the top is a timeline usually split into bars and beats. Each block is a note placed on the grid to show its pitch and the time it occurred. The width of the block is how long the note was held. With this simple editor, anyone with a mouse can enter notes, create patterns, copy and paste musical ideas and send them to play any sound of their choosing.
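One way to picture what a piano roll stores is as a list of blocks, each with a pitch, a start time and a length. The sketch below is purely illustrative: the `Note` class and the `to_events` helper are made-up names, not any DAW's API. It shows how such blocks could be flattened into a time-ordered stream of note-on and note-off events.

```python
from dataclasses import dataclass

@dataclass
class Note:
    pitch: int          # MIDI note number, 60 = middle C
    start: float        # position on the timeline, in beats
    length: float       # width of the block, in beats
    velocity: int = 100

def to_events(notes):
    """Flatten piano-roll blocks into (time, kind, pitch, velocity) tuples."""
    events = []
    for n in notes:
        events.append((n.start, "note_on", n.pitch, n.velocity))
        events.append((n.start + n.length, "note_off", n.pitch, 0))
    # Sort by time; at equal times put note_off first so repeated notes retrigger.
    return sorted(events, key=lambda e: (e[0], e[1] != "note_off"))

roll = [Note(60, 0.0, 1.0), Note(64, 1.0, 1.0), Note(67, 1.0, 2.0)]
for event in to_events(roll):
    print(event)
```

Dragging a block sideways in the editor would only change `start`, and stretching it would only change `length`; the sound that eventually plays is decided elsewhere.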

While MIDI can be about notes, it can also be used for recording changes to synthesizer parameters. MIDI Control Change data is generated by the turning of knobs and moving of sliders on a synth’s front panel. This information can also be recorded and edited in a Sequencer, enabling you to design tonal changes that fit your music. It also lets the knobs on your MIDI controller control the filter cutoff, envelopes, oscillators and other functions on your hardware or software synthesizers.

HOW DOES MIDI WORK?

MIDI consists of several uniquely defined events or commands that every MIDI device understands. When you press a key on a MIDI keyboard it generates a “Note on” event. This event contains the note number (pitch) and the velocity value. Any MIDI device which receives this event will sound the specified note with the specified velocity (assuming the device supports velocity, not all synths do). That note will be held on until you take your finger off the key which generates a “Note off” event that tells the other MIDI devices to stop playing that note.
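As a rough illustration of what travels down the cable, a “Note on” or “Note off” event is three bytes: a status byte carrying the message type and channel, then the note number and the velocity. The helper names below are hypothetical and the sketch only builds the bytes; it does not talk to any MIDI port.

```python
NOTE_OFF = 0x80
NOTE_ON = 0x90

def note_on(note, velocity, channel=1):
    """Three bytes: status (message type + channel), note number, velocity."""
    return bytes([NOTE_ON | (channel - 1), note & 0x7F, velocity & 0x7F])

def note_off(note, velocity=0, channel=1):
    """Same layout as note_on, with the Note off status."""
    return bytes([NOTE_OFF | (channel - 1), note & 0x7F, velocity & 0x7F])

print(note_on(60, 100).hex(" "))   # middle C pressed:  90 3c 64
print(note_off(60).hex(" "))       # middle C released: 80 3c 00
```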

At no point is “sound” being recorded when you are working with MIDI. As with our sheet music analogy you are recording information about the music; the pitch, the note length, the dynamics, not the sound of the music itself. This means that you can change the sound being played, edit the notes and rearrange the music to your heart’s content.

It would be good to define some of the MIDI devices we’re talking about and how they are physically connected to one another.

- MIDI Controller Keyboard – A piano-style electronic keyboard that generates MIDI data and has no sounds, synthesis or instrument engine of its own. It’s designed for playing other MIDI devices or for connecting to a computer for MIDI sequencing.

- MIDI Synthesizer/Workstation – A synthesizer instrument with a built-in MIDI keyboard that generates sounds that can be accessed over MIDI.

- Sound Module – A slightly archaic term for a hardware box of electronic sounds that has no keyboard and can only be played over MIDI. Sound modules can usually be racked up and have been largely replaced by the computer and virtual instruments.

- Desktop Module/Synth – A synthesizer or electronic sound generator without a keyboard in a desktop form factor that would be played via MIDI.

- Hardware Sequencer – A desktop unit designed for the recording, editing and playback of MIDI notes and data.

- Software Sequencer – Computer software designed for the recording, editing and playback of MIDI notes and data, usually as part of a Digital Audio Workstation (DAW).

- Virtual Instruments/Synthesizers, VSTis, Soft-Synths – Software sound sources that run on a computer, usually inside a sequencer or DAW.

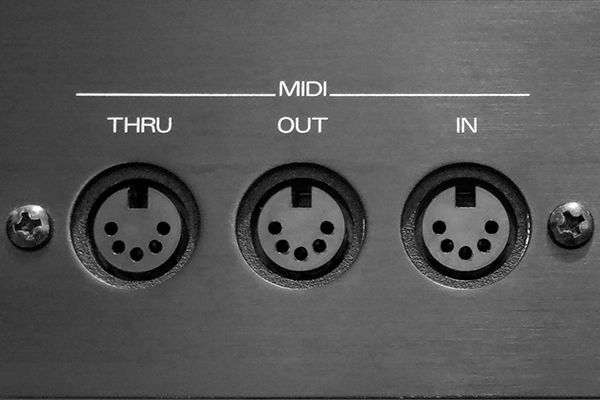

CONNECTING MIDI DEVICES: MIDI IN, OUT AND THRU

The MIDI connection is traditionally in the form of a 5-pin DIN cable. DIN was a popular audio format when MIDI was invented in the 1980s, but you’d be hard pushed to find it in use for anything other than MIDI these days. Recently synth manufacturers have been using a TRS Minijack format to reduce the size and cost of MIDI ports. MIDI can also be carried over USB directly to a computer bypassing the need for any other MIDI interface. Most MIDI keyboard controllers use USB-MIDI, which also has the benefit of providing power.

MIDI is a one-way connection, and so you need a separate port for MIDI In and MIDI Out. The MIDI Out of your controller keyboard would need to connect to the MIDI In of the synthesizer to play the sounds. If you wanted to record the Control Change data from the movement of the knobs of the synth, you would need to connect the MIDI Out on the synth to the MIDI In on the sequencer. USB-MIDI does the whole thing in both directions down the one cable.

Many synths have a MIDI Thru, which is a useful way of chaining different devices so that they can be accessed from a single MIDI controller keyboard. The MIDI Thru takes whatever is coming into the MIDI In and sends it back out again. Connect the MIDI Thru to the MIDI In on the next synth to send the MIDI from the controller keyboard onto it.

MIDI CHANNELS

Chaining synths and keyboards like this lets you play a lot of different sounds with a single press of a key. But wouldn’t it be helpful to be able to address each synth separately? The makers of MIDI thought of that and gave us 16 independent MIDI channels to use over a single MIDI connection. You could chain up 16 synths, sound modules and devices and have each one set to a different MIDI channel. You can switch channels to play only the sounds you want to play from a specific synth on your controller keyboard. Some synths and workstations are multitimbral, which means they support playing more than one sound at a time, each sound being on a different MIDI channel.
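The channel number rides in the low four bits of each message's status byte, which is how a chained device can tell whether a message is meant for it. The following is a small simulation under assumed names (`Synth`, `midi_in`), not real device code: every synth passes its input along as a MIDI Thru would, but only reacts to its own channel.

```python
def channel_of(message):
    """Return the 1-16 channel of a channel message (low 4 bits of the status byte)."""
    return (message[0] & 0x0F) + 1

class Synth:
    """Hypothetical receiver that only responds to its own channel."""
    def __init__(self, name, channel):
        self.name = name
        self.channel = channel
        self.received = []

    def midi_in(self, message):
        if channel_of(message) == self.channel:
            self.received.append(message)
        return message  # MIDI Thru: pass the input along unchanged

chain = [Synth("bass", 1), Synth("pad", 2), Synth("drums", 10)]
stream = [bytes([0x90, 36, 100]), bytes([0x91, 60, 80]), bytes([0x99, 38, 127])]
for message in stream:
    for synth in chain:
        message = synth.midi_in(message)

for synth in chain:
    print(synth.name, [m.hex(" ") for m in synth.received])
```

Each synth ends up with exactly one of the three notes, even though all three messages passed through the whole chain.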

So, to recap. MIDI is a digital connection between MIDI devices, like synths, keyboards and your computer, sending and receiving information about notes and parameters. That information can be used to play one synth from another, or it can be recorded and edited in a sequencer. At no time is sound recorded, so you are free to change all the instruments and synths playing the sounds and edit all the notes and arrangements. MIDI is marvellous.

WHAT IS A MIDI FILE?

The sort of data that’s generated with MIDI, the notes, the controller information and so on, is very easily transferable. MIDI can be saved and exported from a sequencer as a MIDI File which can then be loaded and opened by another sequencer. This way you can write a MIDI composition and send it to a friend who could then play it through their own MIDI gear. And that’s an important point: MIDI Files do not contain any sound, they contain details on what notes were played and how parameters were changed. So when your friend plays the file it has to be directed to their MIDI sound machines which might have radically different sounds to the ones you used.
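To make the “no sound inside” point concrete, here is a minimal sketch of a single-track (format 0) Standard MIDI File built by hand. The function names are made up for the example and the layout is kept to the bare minimum: a header chunk, then one track chunk of time-stamped messages closed by an End of Track marker.

```python
import struct

def vlq(value):
    """Encode a delta time as a MIDI variable-length quantity."""
    out = [value & 0x7F]
    value >>= 7
    while value:
        out.append((value & 0x7F) | 0x80)
        value >>= 7
    return bytes(reversed(out))

def midi_file(events, ticks_per_beat=480):
    """Build a format 0 file from (delta_ticks, message_bytes) pairs."""
    track = b"".join(vlq(delta) + msg for delta, msg in events)
    track += vlq(0) + bytes([0xFF, 0x2F, 0x00])  # End of Track meta event
    header = b"MThd" + struct.pack(">IHHH", 6, 0, 1, ticks_per_beat)
    return header + b"MTrk" + struct.pack(">I", len(track)) + track

data = midi_file([
    (0, bytes([0x90, 60, 100])),    # note on, middle C
    (480, bytes([0x80, 60, 0])),    # note off one beat later
])
print(len(data), "bytes, no audio inside")
```

Writing `data` to a file with a `.mid` extension should give something a sequencer can open; the whole one-note “song” is a few dozen bytes because it only describes what to play.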

MIDI SETUP EXAMPLES

MIDI setups can be as simple as connecting two MIDI devices, so you can play and control both devices from either one – or as complex as a whole MIDI studio with a MIDI controller keyboard and computer at the center, controlling a host of connected MIDI devices. Here are a few setup examples:

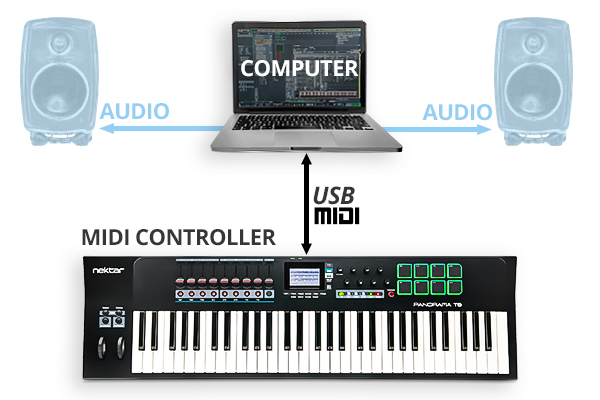

COMPUTER + MIDI-USB

One of the most straightforward and versatile MIDI setups is a MIDI controller keyboard plugged via USB into a laptop or desktop computer. A computer can run a vast array of virtual instruments from orchestras to guitars, acid bass to screaming leads, pianos to drum kits, sound effects and sampled loops. All of them can be played from your single MIDI controller keyboard, and you may have knobs or sliders on it that can be mapped to the controls giving a hardware feel to a software synth.

Using the keyboard and your mouse, you can compose a symphony, write melodies, tap in some beats, experiment with patterns of notes, generate chords, produce drum tracks and develop an arrangement in a multitude of versions. You can draw in controller data to automate the filter cutoff, deepen reverbs or record knob movements from your controller to modulate parameters. When you’re happy with your music, you can mix it down to audio within your DAW software and upload it to Spotify or Bandcamp without using anything other than that keyboard, your computer and the power of MIDI.

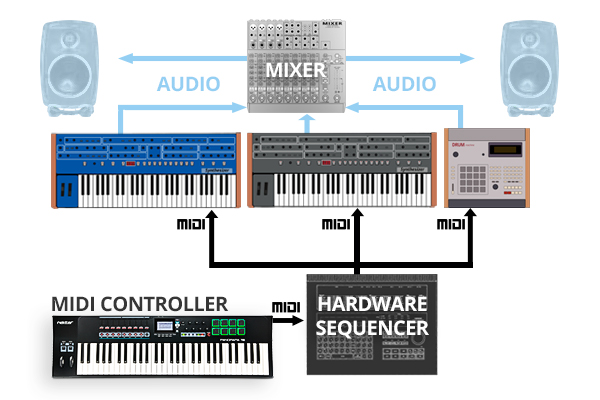

HARDWARE SEQUENCER + SYNTHS + DRUM MACHINE

Computers can make MIDI feel very transparent, so when you apply the same idea to hardware MIDI devices, it can appear a little clunky in comparison. It’s working in precisely the same way but with visible cables and physical connections. With a MIDI keyboard, a hardware sequencer, and a couple of synthesizers, you can build tracks, chain patterns, and arrange for recording or live performance.

The sequencer would be the hub of the operation, and the MIDI keyboard would plug directly into it from MIDI Out to MIDI In. The MIDI Out of the sequencer would go to the two synthesizers and drum machine, either separately if the sequencer has 3 MIDI Out ports, or if not, the MIDI would go to the first synth and then use a MIDI Thru to go to the second and so on. If neither synth has a MIDI Thru, you’ll need a simple MIDI splitter box. Each device would need to be set to its own MIDI channel to sequence them separately.

The flow of MIDI data would then be from your keyboard into the sequencer for recording and then out to the synthesizers and drum machine. You can usually edit and add notes directly into the sequencer via its own interface. You can often add controller data for modulating parts of the synth in time to the notes, parts and patterns you’re creating. The MIDI keyboard is not essential in that respect, but it makes things a lot easier.

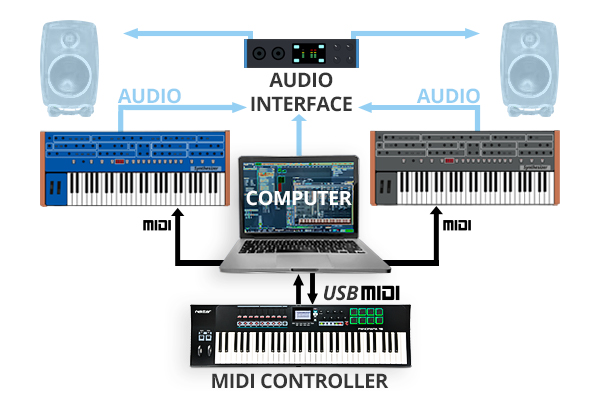

HYBRID SETUP: SOFTWARE + HARDWARE SYNTHS

What’s increasingly common these days is people combining software sequencing, virtual instruments and hardware synthesizers. So rather than a hardware sequencer, you have your computer sitting in the middle of all your gear. Your external synths might connect to the computer via USB, or you may need a USB MIDI interface to provide you with enough ports. When you load a virtual instrument, your DAW automatically creates a track for it, already labelled up and ready to go. MIDI isn’t intelligent enough (yet – see MIDI 2.0) to recognise hardware synths, and so you’ll have to tell your DAW which tracks to use for external gear and set up the channels. If your setup is more or less permanent, it doesn’t take much to set up studio templates so that it’s all ready to go whenever you start a new project.

A hybrid setup can get interesting because you can use your computer’s DAW software as a mixer for all your external gear. With a suitable audio interface, you can plug the audio output of each synth into your DAW and create a channel for them in the mixer. This makes it much easier to mix everything down inside the computer once you’re happy with your song. However, external mixers still very much have their place for this sort of thing.

WHAT ARE MIDI MESSAGES?

We’ve talked very generally about MIDI events and controller data, but what can MIDI actually handle? You have 6 main events to do with the playing of the instrument, and these are supported by a 7th which contains a whole bunch of “Control Change” data that acts on the device itself. Each MIDI message includes up to 3 things: the first announces what it is (and on which channel), followed by one or two relevant pieces of data. So, an example could be:

- Hello, I’m playing a note

- It is this note

- And this hard

Those 7 main events are:

- Note off – note number – velocity

- Note on – note number – velocity

- Polyphonic Key pressure/aftertouch – note number – pressure value

- Control Change – controller number – controller value

- Program change – Program number – none

- Channel pressure/aftertouch – pressure value – none

- Pitch Bend – LSB – MSB (two 7-bit numbers that combine into one value from 0 to 16383, describing the bend up or down from the centre value of 8192)
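The list above can be sketched as a small lookup table keyed on the top four bits of the status byte. This is an illustrative decoder with a made-up `describe` function, and it assumes it is handed one complete channel message at a time. Note that Pitch Bend sends its least significant byte first, and the two 7-bit bytes are joined into a single 14-bit value.

```python
MESSAGE_TYPES = {
    0x80: ("Note off", 2),
    0x90: ("Note on", 2),
    0xA0: ("Polyphonic key pressure", 2),
    0xB0: ("Control Change", 2),
    0xC0: ("Program change", 1),
    0xD0: ("Channel pressure", 1),
    0xE0: ("Pitch Bend", 2),
}

def describe(message):
    """Return (name, channel 1-16, data values) for one channel message."""
    status = message[0]
    name, data_len = MESSAGE_TYPES[status & 0xF0]
    data = list(message[1:1 + data_len])
    if name == "Pitch Bend":
        # Two 7-bit bytes, least significant first, form one 14-bit value.
        data = [data[0] | (data[1] << 7)]
    return name, (status & 0x0F) + 1, data

print(describe(bytes([0x90, 60, 100])))      # ('Note on', 1, [60, 100])
print(describe(bytes([0xC2, 5])))            # ('Program change', 3, [5])
print(describe(bytes([0xE0, 0x00, 0x40])))   # ('Pitch Bend', 1, [8192])
```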

AFTERTOUCH: CHANNEL AND POLY PRESSURE

There are two pressure/aftertouch entries. “Channel pressure” is when the pressure applied to the keys affects all the sound generated, just like pitch bend and the modulation wheel do. It’s the most common and only requires a single sensor in the keyboard to work. “Poly” or “Key” pressure is for when the pressure is registered individually per note, requiring a sensor on every key or pad. Poly pressure is supported by Nektar’s Aura pad controller and the pads on Panorama T4/T6 for example (the T-series keybed offers channel pressure). Poly pressure is occasionally found in synthesizers, such as the classic Yamaha CS-80 and more recent ASM Hydrasynth. Per-note pressure is also offered by specialist MIDI controllers like the Expressive E Osmose or LinnStrument through MPE (MIDI Polyphonic Expression), which gives each note its own MIDI channel.

MIDI CONTROL CHANGE MESSAGES (CC#)

The Control Change event offers up to 120 assignable controls that can be mapped to any parameter within your MIDI instrument. It’s what enables you to use the Mod wheel, knobs and sliders on your MIDI controller to change the filter cutoff, envelope and modulation depth in the software or hardware synthesizer you are playing. A few of these are predefined to make MIDI control a bit more automatic.

The Control Change messages are numbered and are known as Continuous Controller numbers or CC#. CC#1 is assigned to the Modulation Wheel, so most synths respond to CC#1 with whatever their mod wheel normally does, typically the depth of an LFO acting on the pitch of the sound. CC#7 is Volume; CC#10 is Pan; CC#64 is the Sustain Pedal. For synthesis controls, CC#71 and CC#74 are commonly used for Filter Resonance and Filter Cutoff, respectively, but the convention is not always followed as synthesizers don’t always share the same parameters. Any of the CC# can be used to address different parameters within a synth; you just have to match them up. Software synths and DAWs tend to bypass any confusion by offering up a method of quickly mapping hardware controls to software parameters. While mapping controls from a MIDI controller to a synthesizer works well, especially with Nektar DAW integration or Nektarine, it can be laborious and frustrating with some gear and is one of the main issues MIDI 2.0 tries to tackle.
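A Control Change message has the same three-byte shape as a note message: a status byte, the controller number and a value from 0 to 127. The sketch below uses made-up helper names, and the mapping of a knob to CC#74 is just an assumed example of “matching them up”, not how any particular controller is configured.

```python
CC_MOD_WHEEL, CC_VOLUME, CC_PAN, CC_SUSTAIN = 1, 7, 10, 64

def control_change(controller, value, channel=1):
    """Build a 3-byte Control Change message (status 0xB0 + channel)."""
    if not 0 <= controller <= 119:
        raise ValueError("CC numbers 120-127 are reserved for Channel Mode messages")
    return bytes([0xB0 | (channel - 1), controller, value & 0x7F])

def knob_to_cc(position):
    """Scale a knob position in the range 0.0-1.0 to the 0-127 CC range."""
    return round(max(0.0, min(1.0, position)) * 127)

# Hypothetical mapping: a controller knob assigned to CC#74 on channel 1.
print(control_change(74, knob_to_cc(0.5)).hex(" "))
print(control_change(CC_SUSTAIN, 127).hex(" "))  # pedal down
```

Whether CC#74 actually moves the filter cutoff depends entirely on the receiving synth, which is why the mapping step matters.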

Eight additional Channel Mode messages (CC#120 to 127) sit after the 120 CC# for special messages that do things like silence all sound, reset all controllers, kill notes, turn local mode on and off and set omni, mono or poly modes. MIDI can also handle some System Messages which are not channel-specific, like the timing clock that carries tempo, stop/start and the position of a song pointer in a sequencer. Lastly, there are System Exclusive messages that contain non-standard data for a specific MIDI device.
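Two of these are easy to sketch. Tempo is not sent as a number; instead the sender emits a single-byte clock message 24 times per quarter note, and the receiver infers the tempo from the spacing. “All Notes Off” is a Channel Mode message, sent like a Control Change with controller number 123. The function names here are illustrative only.

```python
CLOCK, START, CONTINUE, STOP = 0xF8, 0xFA, 0xFB, 0xFC  # single-byte system real-time messages
CLOCKS_PER_QUARTER_NOTE = 24

def clock_interval(bpm):
    """Seconds between MIDI clock bytes at a given tempo."""
    return 60.0 / (bpm * CLOCKS_PER_QUARTER_NOTE)

def all_notes_off(channel=1):
    """Channel Mode message: controller 123, value 0."""
    return bytes([0xB0 | (channel - 1), 123, 0])

print(f"{clock_interval(120) * 1000:.2f} ms between clocks at 120 BPM")
print(all_notes_off(3).hex(" "))
```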

Most MIDI Controllers allow you to make full use of MIDI messages and control changes over all 16 MIDI channels. Most hardware synthesizers come with a published MIDI implementation chart, so you can set your controller up correctly to take control of the parameters on offer. Here is an overview of the MIDI CC numbers and their assigned functions:

| CC NUMBER | FUNCTION | NOTES |
|---|---|---|
| 0 | Bank Select | |
| 1 | Modulation | |
| 2 | Breath Controller | |
| 3 | (Undefined) | |
| 4 | Foot Controller | |
| 5 | Portamento Time | |
| 6 | Data Entry MSB | (MSB = Most Significant Byte) |
| 7 | Channel Volume | (Formerly Main Volume) |
| 8 | Balance | |
| 9 | (Undefined) | |
| 10 | Pan | |
| 11 | Expression | |
| 12 | FX Control 1 | |
| 13 | FX Control 2 | |
| 14-15 | (Undefined) | |
| 16-19 | General Purpose | (#’s 1-4) |
| 20-31 | (Undefined) | |
| 32-63 | LSB for controllers 0-31 | (LSB = Least Significant Byte) |
| 64 | Damper pedal | (Sustain) |
| 65 | Portamento On/Off | |
| 66 | Sostenuto | |
| 67 | Soft Pedal | |
| 68 | Legato Footswitch | |
| 69 | Hold 2 | |
| 70 | Sound Controller 1 | (Default: Sound Variation) |
| 71 | Sound Controller 2 | (Default: Timbre/Harmonic Intensity) |
| 72 | Sound Controller 3 | (Default: Release Time) |
| 73 | Sound Controller 4 | (Default: Attack Time) |
| 74 | Sound Controller 5 | (Default: Brightness) |
| 75-79 | Sound Controller 6-10 | (No Defaults) |
| 80-83 | General Purpose | (#’s 5-8) |
| 84 | Portamento Control | |
| 85-90 | (Undefined) | |
| 91 | FX 1 Depth | (Formerly: External Effects Depth) |
| 92 | FX 2 Depth | (Formerly: Tremolo Depth) |
| 93 | FX 3 Depth | (Formerly: Chorus Depth) |
| 94 | FX 4 Depth | (Formerly: Celeste (Detune) Depth) |
| 95 | FX 5 Depth | (Formerly: Phaser Depth) |
| 96 | Data Increment | |
| 97 | Data Decrement | |
| 98 | NRPN LSB | (NRPN = Non-Registered Parameter Number) |
| 99 | NRPN MSB | |
| 100 | RPN LSB | (RPN = Registered Parameter Number) |
| 101 | RPN MSB | |
| 102-119 | (Undefined) | |
| 120-127 | Reserved | (For Channel Mode Messages) |

(Source: midi.org)
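The MSB/LSB and RPN rows in the table are easier to follow with an example. Controllers 0 to 31 can be paired with controllers 32 to 63 to send a finer 14-bit value in two messages, and the RPN controllers select a parameter that Data Entry (CC#6) then sets. The sketch below uses hypothetical function names; RPN 0 is the registered parameter for pitch bend sensitivity, and how a given synth responds to it is worth checking in its manual.

```python
def cc14(controller, value, channel=1):
    """Send a 14-bit value as an MSB message (CC 0-31) plus its LSB partner (CC + 32)."""
    if not 0 <= controller <= 31:
        raise ValueError("only CC 0-31 have an LSB partner")
    status = 0xB0 | (channel - 1)
    msb, lsb = (value >> 7) & 0x7F, value & 0x7F
    return bytes([status, controller, msb]) + bytes([status, controller + 32, lsb])

def pitch_bend_range(semitones, channel=1):
    """Select RPN 0 with CC 101/100, then set it with Data Entry MSB (CC 6)."""
    status = 0xB0 | (channel - 1)
    return bytes([status, 101, 0, status, 100, 0, status, 6, semitones & 0x7F])

print(cc14(1, 8192).hex(" "))          # mod wheel at its 14-bit midpoint
print(pitch_bend_range(12).hex(" "))   # ask for a bend range of one octave
```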

WHAT IS MIDI 2.0?

MIDI is powerful, but it’s also not very intelligent and is massively overdue for an update. The main problems are to do with resolution and conversation. Most MIDI 1.0 parameters are limited to 128 values (0 to 127), and this can sound a little “steppy” when changing parameters on synthesisers (e.g. pitch or filter cutoff). By conversation we mean that MIDI isn’t asking any questions. It sends out information in response to physical movements, and another device receives it and blindly follows the instructions. MIDI doesn’t know what either device is, doesn’t know if it worked or not and doesn’t really care. MIDI 2.0 hopes to increase the resolution and make MIDI more intelligent and perhaps more sympathetic.
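The “steppy” effect is simple arithmetic. The sketch below quantizes a slow sweep across 1% of a knob's travel at different bit depths. The 7-bit row matches a standard MIDI 1.0 controller; the 14-bit and 32-bit rows are included for comparison, on the assumption (not stated in this article) that MIDI 2.0 controller values are 32 bits wide. The `quantize` helper is made up for the example.

```python
def quantize(position, bits):
    """Map a 0.0-1.0 position to the nearest step available at a given bit depth."""
    steps = (1 << bits) - 1
    return round(position * steps) / steps

# A slow sweep over 1% of a knob's travel, sampled 50 times.
sweep = [0.50 + 0.01 * i / 49 for i in range(50)]
for bits in (7, 14, 32):
    distinct = len({quantize(p, bits) for p in sweep})
    print(f"{bits:>2}-bit: {1 << bits:>10} values, {distinct} distinct steps in the sweep")
```

At 7 bits the whole sweep collapses onto a couple of values, which is the audible stepping described above; at higher resolutions every sample lands on its own step.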

How awesome would it be to plug a MIDI cable between two devices and have both automatically find each other, share information about their parameters and set themselves up ready to play? This is what MIDI 2.0 calls MIDI Capability Inquiry (MIDI-CI) and Property Exchange. This negotiation and exchange of information is a core aspect of the new specification. Common Profiles define a set of rules that every device can follow so that the controls on one device will operate similar controls on the other without any setup or mapping required. The brilliant thing is that, while these additional protocols exist and the data resolution is being extended, if a connection doesn’t work or encounters an unsupported device, it will drop back to MIDI 1.0 rules and work as it always has.

MIDI’s strength has always been that it became a true standard, and adoption of any “update” to this will take some time (so it is great that MIDI 2.0 is backwards compatible).

But several technical issues will have to be addressed along the way, starting with the fact that legacy DIN and mini TRS connectors are likely not adequate for the new protocol. Also, many hardware parts currently in use are unlikely to be able to take full advantage of MIDI 2.0, even with just a doubling in resolution. And while USB is able to handle MIDI 2.0, at the time of writing there are no transport/class drivers available in any operating system. So it is still early days! Nektar is of course committed to MIDI 2.0 and we will be looking to take advantage of the new opportunities: we strive to offer even better interoperability to our users than we provide today.